Researcher Mallari Shroff examines how artificial intelligence is shaping school admissions, grading, and exam monitoring — and whether Europe’s new AI law is enough to ensure fairness.

Mallari Shroff

AI Policy & Social Data Science Researcher | Dublin, Ireland

Editor’s Note

This article is part of the Policy Analysis series of TheNews21, where researchers and experts examine emerging global issues in technology, governance, and society.

In this analysis, researcher Mallari Shroff explores how artificial intelligence systems are already influencing decision-making in European schools and evaluates whether the European Union’s new AI regulatory framework can adequately address concerns around bias, accountability, and fairness.

POLICY BRIEF | AI GOVERNANCE & EDUCATION

European Schools Are Already Using AI to Judge Children. Is Anyone Checking If It’s Fair?

EXECUTIVE SUMMARY

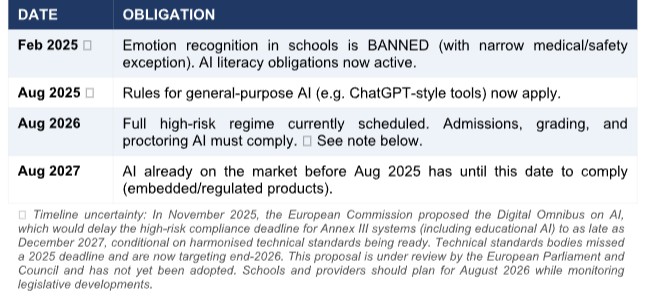

AI systems are already deciding which students gain entry to school programmes, how their work is graded, and whether they cheated during an exam. In most European schools, nobody is checking whether those decisions are fair. A systematic review of the research found that these systems consistently disadvantage students based on race, gender, socioeconomic background, and disability (Baker & Hawn, 2022). The EU AI Act (2024) is the first law anywhere in the world to classify educational AI as high-risk, imposing real legal obligations on providers and schools. Schools face a compliance deadline currently set for August 2026, though this may be extended under proposed amendments (see timeline section).

Compliance with the Act is necessary but not sufficient. The law does not evaluate whether AI helps students learn, it cannot prevent technically compliant systems from reinforcing inequality, and it does not create the teacher capacity needed to exercise the oversight it requires. This brief argues that governments, schools, and AI providers must act now, before any compliance deadline, to close these gaps and ensure the Act delivers real protection, not just legal cover.

THE PROBLEM AND WHY IT CANNOT WAIT

AI is already embedded in European schools at all levels, and its consequences are already falling unevenly. Algorithms help determine who is admitted to programmes, measure whether students are learning, and monitor students during exams for signs of misconduct. These are not peripheral administrative functions; they are decisions that shape which children get access to which opportunities.

The harm is documented.

Baker & Hawn (2022), in a systematic review of the empirical literature, found that AI tools in education consistently disadvantage students based on race, gender, socioeconomic background, and disability, not through deliberate design, but because the data these systems learn from reflects existing inequalities, and those inequalities get reproduced at scale. Automated essay scoring penalises students from lower-income backgrounds. Predictive tools that flag students as ‘at risk’ reinforce racial disparities in how schools allocate support. AI proctoring systems, widely adopted during the COVID-19 pandemic, disproportionately flagged students of colour for alleged cheating.

Until August 2024, there was no legal framework governing any of this. Schools and technology providers operated without standardised obligations, without required bias testing, and without any formal accountability when systems went wrong. Students had no recourse. That has now changed, but whether the change delivers real protection depends on decisions that have not yet been made. It is also worth noting that existing data protection law (GDPR) provides some individual rights around automated decision-making but does not address the structural bias and pedagogical harms described here. The AI Act fills a gap that GDPR was never designed to close.

WHAT IS AT STAKE: These are not edge cases. AI tools affecting admissions, grading, learning pathways, and exam integrity are in active use across EU member states. Bias in these systems does not produce random errors; it produces systematic errors that fall hardest on students who already face the most barriers.

WHAT HAS CHANGED: THE EU AI ACT

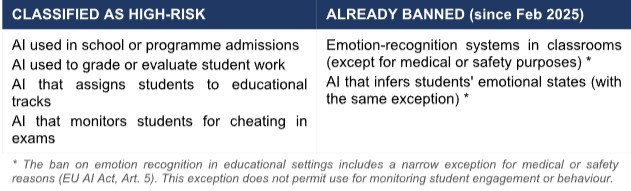

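The EU AI Act (Regulation (EU) 2024/1689) classifies four categories of educational AI as HIGH-RISK, meaning they face strict legal obligations rather than outright bans:

- Systems that determine access or admission to educational programmes
- Systems that evaluate learning outcomes, including grading
- Systems that assess the appropriate level of education a student will receive
- Systems that monitor and detect prohibited behaviour during tests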

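High-risk classification means providers and schools must meet demanding requirements: ongoing risk management; bias testing in training data; technical documentation; operation logs for audit; and, critically, human oversight that allows qualified staff to review and override AI outputs. The obligations take effect on a phased timeline: the Act entered into force in August 2024, and the high-risk obligations are currently set to apply from August 2026, subject to the amendments proposed in the Digital Omnibus.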

POLICY ALTERNATIVES: THREE APPROACHES, ONE CLEAR CHOICE

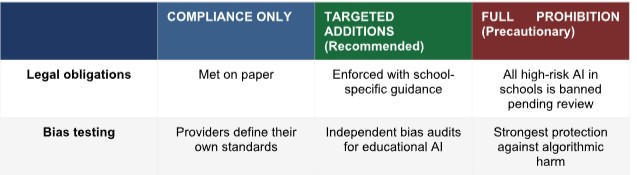

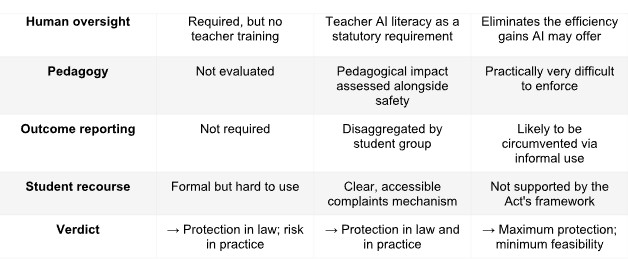

Policy-makers face a genuine choice: not whether to implement the Act, but how much further to go. Three approaches are available. Only one protects students in practice, not just on paper.

Full prohibition would be the strongest protection, but it is neither feasible nor supported by the Act’s own framework, which draws a deliberate distinction between prohibited and high-risk uses. Compliance alone is achievable, but leaves three gaps that will predictably produce harm:

- The Act tests safety, not learning. A system can satisfy every legal requirement while offering no demonstrable educational benefit. UNESCO found that most claims about AI’s educational impact are based on conjecture, speculation, and optimism and that robust evidence remains scarce (Miao et al., 2021). Schools deserve to know whether the tools they are required to oversee work.

- Bias survives compliance. Baker & Hawn (2022) document that technically sound AI systems consistently reproduce inequality. Bias enters through outcome variable definition and feature selection, not just training data, meaning standard data governance requirements do not catch it. Without independent auditing, compliant systems will keep producing discriminatory results.

- Human oversight is meaningless without human capacity. The Act requires qualified staff to meaningfully review and override AI outputs. Most schools currently have no teachers with the training to do this (Miao et al., 2021). The legal right to oversight exists; the practical ability to exercise it does not.

The targeted additions approach addresses all three gaps without departing from the Act’s framework. It is this brief’s recommended course of action.

POLICY RECOMMENDATIONS

The following recommendations are specific, actionable, and grounded in the evidence. They are addressed to the three groups with the most direct power to act.

For the European Commission and national governments

- Publish school-specific guidance without further delay. The Commission was legally required under Article 6 to publish high-risk classification guidance by February 2026 — and missed that deadline. This failure makes school-specific guidance more urgent, not less. Guidance must go beyond generic compliance templates and include concrete examples for educational institutions at all levels, covering admissions tools, adaptive learning platforms, and proctoring systems. Schools cannot prepare for obligations they cannot interpret.

- Make teacher AI literacy a statutory requirement. The Act’s human oversight provisions fail if teachers cannot interrogate algorithmic outputs. National education ministries should legislate AI literacy as a core component of both initial teacher education and continuing professional development, with ring-fenced funding. This is not a technology issue; it is a professional competency issue.

- Fund independent bias auditing for educational AI. Provider self-certification is insufficient given documented evidence that compliant systems can still harm students (Baker & Hawn, 2022). An independent audit mechanism, analogous to a financial audit, should be established for high-risk educational AI, with mandatory reporting of outcomes disaggregated by race, gender, socioeconomic status, and disability. Audit findings should be publicly accessible.

- Require evidence of educational effectiveness, not just technical safety. Work with national research bodies to develop a complementary framework requiring providers to demonstrate that their systems benefit students, not just that they pass technical safety checks. A system that is safe but ineffective is still a problem for the students using it.

- Establish a student-facing complaints mechanism. Students and parents currently have no clear, accessible route to challenge AI-driven decisions. A complaints pathway modelled on existing GDPR supervisory authority structures should be established before the high-risk regime takes effect. It should be simple, accessible, and backed by binding authority.

For school principals and governing bodies

- Start your AI audit now. Identify every AI tool currently in use in your school, including those embedded in commercial platforms and learning management systems. Determine which fall under the Act’s high-risk classifications. A meaningful audit takes time, and the compliance deadline, whether August 2026 or later under the proposed Digital Omnibus, does not allow for a last-minute scramble.

- Demand documentation from every AI provider before renewing contracts. Any provider unable to produce the Act’s required technical documentation, such as risk assessments, bias evaluations, and operation logs, should not be contracted for high-stakes educational decisions. Make documentation a procurement condition, not an afterthought.

- Build real oversight capacity. Designating a staff member as ‘AI lead’ on paper is not enough. That person must have substantive technical and pedagogical training, protected time in their timetable, and genuine authority to challenge and override AI outputs. Oversight that exists only in a job title protects nobody.

For AI developers and EdTech providers

Note: Developers operate under market incentives that policy cannot fully control. The recommendations below are therefore framed primarily as criteria for public procurement decisions, which government bodies have direct power to enforce.

- Test for bias at every stage of the pipeline and publish results as a procurement condition. Bias enters through outcome variable definition, feature selection, and deployment context, not only through training data (Baker & Hawn, 2022). Disaggregated performance testing by demographic group at each stage should be a minimum condition for public sector procurement. Schools cannot exercise informed oversight over systems whose bias profiles are withheld.

- Represent the evidence base honestly in procurement materials. UNESCO’s review of the field found widespread overstatement of AI’s educational effectiveness (Miao et al., 2021). Procurement processes should require providers to distinguish between independently replicated findings and internal evaluations. Schools are making high-stakes decisions on the basis of marketing claims that the evidence does not support.
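As an illustration of what disaggregated performance testing by demographic group could look like in practice, the following is a minimal sketch in Python. It is not drawn from the Act or from any provider's tooling: the function names, the group labels, and the records are all hypothetical, and the ratio of highest to lowest flag rate is only one of several possible disparity measures an auditor might choose.

```python
from collections import defaultdict


def flag_rates_by_group(records):
    """Return the share of flagged students within each demographic group.

    `records` is an iterable of (group_label, was_flagged) pairs.
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, was_flagged in records:
        totals[group] += 1
        if was_flagged:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Ratio of the highest to the lowest group flag rate (1.0 means parity)."""
    lowest = min(rates.values())
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group was flagged at all, so the rates are equal
    if lowest == 0:
        return float("inf")  # at least one group is never flagged
    return highest / lowest


# Hypothetical, made-up records: (group label, flagged by a proctoring tool?)
records = [
    ("group_a", True), ("group_a", False), ("group_a", False), ("group_a", False),
    ("group_b", True), ("group_b", True), ("group_b", False), ("group_b", False),
]

rates = flag_rates_by_group(records)
ratio = disparity_ratio(rates)
print(rates)  # flag rate per group
print(ratio)  # how many times more often the most-flagged group is flagged
```

A report built this way shows at a glance whether one group is flagged far more often than another. Deciding what ratio counts as unacceptable, and whether the gap reflects bias rather than a real difference, remains a judgement for the auditor and the school.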

SOURCES

Baker, R. S., & Hawn, A. (2022). Algorithmic bias in education. International Journal of Artificial Intelligence in Education, 32(4), 1052–1092. https://doi.org/10.1007/s40593-021-00285-9

European Commission. (2025, November 19). Proposal for a regulation on the simplification of the implementation of harmonised rules on artificial intelligence (Digital Omnibus on AI). https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

European Parliament & Council of the European Union. (2024). Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). Official Journal of the European Union, L, 1–144. https://eur-lex.europa.eu/eli/reg/2024/1689/oj/eng

Holmes, W., Porayska-Pomsta, K., Holstein, K., Sutherland, E., Baker, T., Buckingham Shum, S., Santos, O. C., Rodrigo, M. T., Cukurova, M., Bittencourt, I. I., & Koedinger, K. R. (2022). Ethics of AI in education: Towards a community-wide framework. International Journal of Artificial Intelligence in Education, 32(2), 504–526. https://doi.org/10.1007/s40593-021-00239-1

Miao, F., Holmes, W., Huang, R., & Zhang, H. (2021). AI and education: Guidance for policy-makers. UNESCO. https://doi.org/10.54675/PCSP7350

Zawacki-Richter, O., Marín, V. I., Bond, M., & Gouverneur, F. (2019). Systematic review of research on artificial intelligence applications in higher education — where are the educators? International Journal of Educational Technology in Higher Education, 16, Article 39. https://doi.org/10.1186/s41239-019-0171-0

(This article is part of TheNews21 Policy Analysis series exploring the global governance challenges of emerging technologies.)